When and How

The discussions are hosted online on Lark and WeChat.

- Lark is our primary communication channel.

- Join the group using

- WeChat serves mostly as our backup channel.

If you would like to be part of the party, please create a post here on GitHub discussions.

The discussions are mostly in Chinese.

When

This is a bi-weekly meetup.

There are two different ways to keep track of the upcoming events:

- Add this ICS to your calendar.

- Calendar ICS URL: add this ICS to your calendar app to follow the upcoming events.

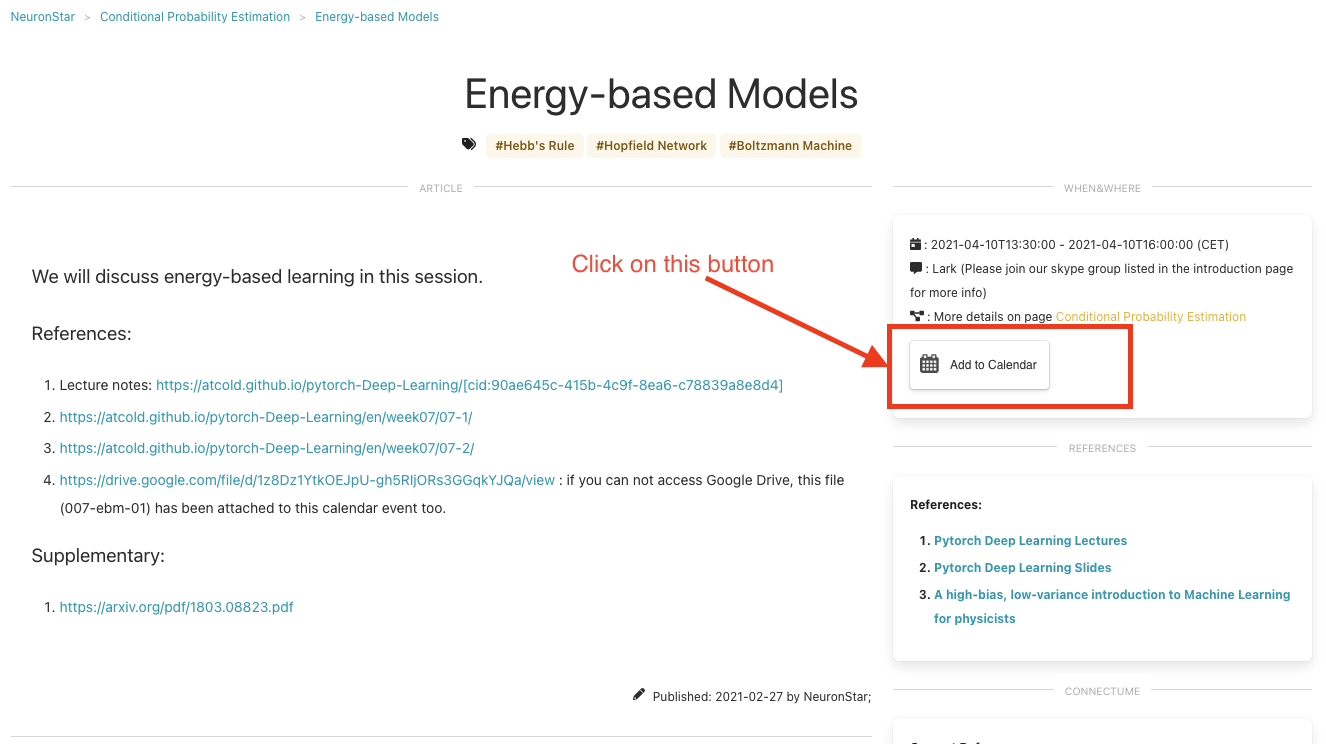

- If you would like to add individual events by yourself, use the “Add to Calendar” button on the specific event page.

- Here is the button:

To preview the upcoming events, see the calendar web page (Calendar Page):

Rules

- Everyone shall get their chance to lead the discussion.

- The first principle is to understand the content. Interrupt and ask any questions to make sure we all understand the content well.

Why this Topic

Conditional probability estimation is one of the most fundamental problems in statistics.

- Conditional probability estimation is frequently used in solving both real-life and academic problems. One is likely to encounter this problem at some point in their life.

- If you are inferring, you are probably using conditional probabilities. It is a perspective.

- There are many models and methods to estimate the conditional probability. We can learn about and from these models and methods.

- For productivity, we need a universal model for this task. Such a model would save us a lot of time and energy.

- Many machine learning methods are based on conditional probabilities.

- Many classifiers

- Bayesian networks

- …

What is Our Approach

- Read and Discuss

- Apply on toy problems

Reading List and References

We will update this list as we go. Here is a partial list of references.

Initial Proposal (Outdated)

As a start, this is an outline of what should be covered.

- What is the conditional probability?

- Sampling theory

- Bayes

- Representation of a conditional probability

- Statistical methods to estimate the conditional probability

- The list is enormous. We will only concentrate on the basics.

- Tree-based

- Tree as “clustering” method

- Application on the bike-sharing problem

- NN-based

- NN as feature transformations

- Application on the bike-sharing problem

- EM Methods

- Variational Methods

- Normalizing Flow

- To be added as we learn more about it
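To make the first item of the outline concrete, here is a minimal sketch of the simplest possible estimator: the relative-frequency estimate of P(y | x) for discrete variables. The function name `estimate_conditional` and the toy samples are hypothetical illustrations, not the group's dataset or an agreed method.

```python
from collections import Counter


def estimate_conditional(pairs):
    """Estimate P(y | x) from (x, y) samples by relative frequency."""
    joint = Counter(pairs)                    # counts of each (x, y) pair
    marginal = Counter(x for x, _ in pairs)   # counts of each x
    # P(y | x) is approximated by count(x, y) / count(x)
    return {(x, y): n / marginal[x] for (x, y), n in joint.items()}


# Hypothetical toy samples: (weather, whether a bike was rented)
samples = [
    ("sunny", True), ("sunny", True), ("sunny", False),
    ("rainy", False), ("rainy", False), ("rainy", True),
    ("sunny", True), ("rainy", False),
]

p = estimate_conditional(samples)
print(p[("sunny", True)])  # 3 of 4 sunny samples -> 0.75
print(p[("rainy", True)])  # 1 of 4 rainy samples -> 0.25
```

Counting like this only works when x takes a few discrete values with enough samples each. The tree-based and NN-based items in the outline can be read as ways of extending the same idea to richer inputs.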

Toy Problems (Outdated)

We have prepared a dataset that can be used for both classification and regression problems.

Tools

- Timezone conversions: World Clock